BEQI: Open-Source Forex Broker Execution Audit Toolkit 04/05/2026 – Posted in: Arbitrage Software

BEQI: An Open-Source Toolkit to Audit Any Forex Broker's Execution Quality

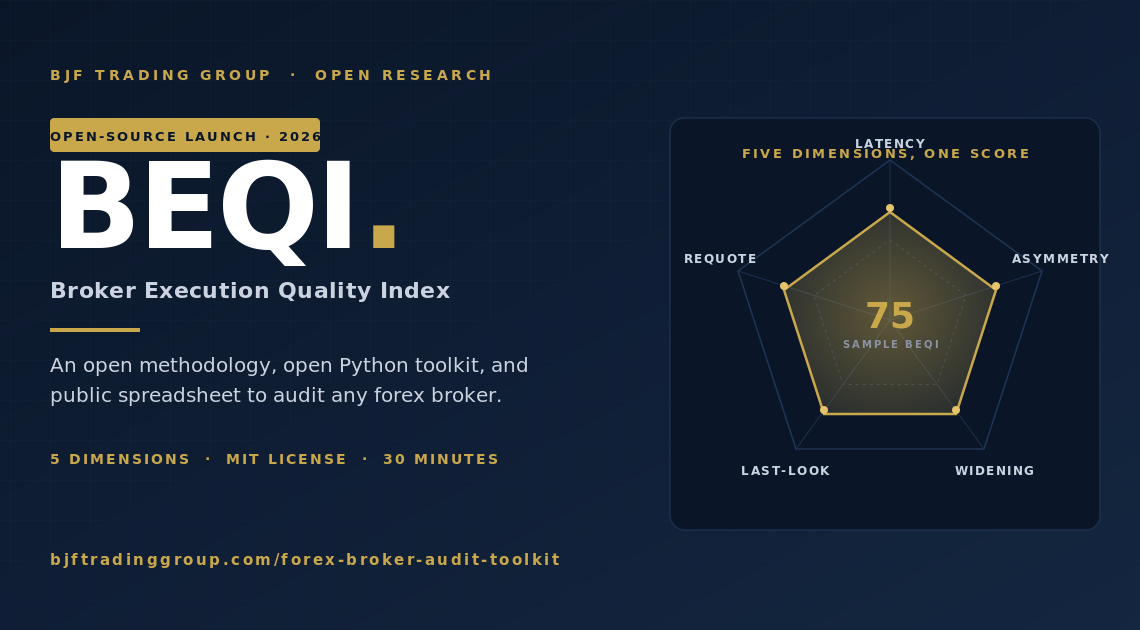

Retail forex brokers do not publish execution quality data. There is no public benchmark for matching latency, slippage asymmetry, spread widening, or last-look hold time. We're changing that. BEQI — the Broker Execution Quality Index — is an open methodology, an open Python toolkit, and a public spreadsheet anyone can contribute to. Measure your own broker in 30 minutes; the data accumulates into a benchmark.

Why a public broker audit didn't exist before

Retail forex execution is the most opaque layer of the trading stack. Spreads are advertised. Commission is published. Everything between order send and confirmed fill — matching latency, slippage symmetry, last-look hold, requote frequency, spread widening at signal moments — is invisible to the trader and unpublished by the broker. Strategy backtests assume zero-latency fills (a problem we documented in detail in Why Latency Arbitrage Backtests Don't Survive in Production). Broker reviews score on UX and withdrawals. Nobody measures the variables that actually decide whether a strategy survives in production.

BEQI fixes one specific problem: there's no public methodology, no shared toolkit, and no benchmark dataset for retail forex execution quality. We've been measuring this internally at BJF Trading Group for years to build broker dialect support into SharpTrader Pro and SharpTrader Optimizer. Now we're open-sourcing the methodology and the code.

The point is not to publish a leaderboard naming brokers (that's a legal minefield and would compromise the integrity of the data). The point is to give every trader, quant, and broker-tech researcher the same standardized way to measure, the same Python scripts to run, and a public place to deposit anonymized results. Over months, the spreadsheet becomes a benchmark. Anyone can replicate the methodology, dispute it, or extend it.

The BEQI thesis in one sentence

If 10,000 traders measure their broker's execution quality with the same open methodology, the result is a public benchmark that no individual broker can deny, no review site can fake, and every strategy developer can use to allocate capital with eyes open.

The five BEQI dimensions

Execution quality is not a single number. BEQI decomposes it into five orthogonal dimensions, each independently measurable from a trading statement (and, for two of them, from a tick stream paired with the statement):

| BEQI dimension | What it measures | Unit | Source | Better is… |
|---|---|---|---|---|
| 1. Matching latency | Median time from order send to confirmed fill | milliseconds | Statement timestamps + send-time log | Lower |
| 2. Slippage asymmetry | Ratio of negative-slippage fills to positive-slippage fills | ratio (1.0 = symmetric, >1.0 = against trader) | Statement: requested vs filled price | Closer to 1.0 |
| 3. Spread widening factor | Spread expansion at signal moments vs baseline | multiplier (1.0 = no widening, 3.0 = 3x widening) | Tick stream around order timestamps | Closer to 1.0 |
| 4. Last-look hold time | Median delay between matched timestamp and confirmation | milliseconds | FIX log or extended statement | Lower / zero |
| 5. Requote rate | Requotes per 100 orders | count per 100 | Statement requote events | Lower / zero |

Each dimension is independently meaningful. A broker can have low matching latency but high slippage asymmetry, or vice versa — the combinations tell different stories about how the broker is actually behaving. The toolkit reports each dimension separately and a composite BEQI score (weighted) at the end.

How to run the audit on your own broker (30 minutes)

You need three things: (a) a trading statement with at least 200 fills covering at least one trading week, (b) Python 3.10+ on any machine, (c) 30 minutes. The toolkit handles the rest.

Step 1 — Export your statement

The toolkit accepts three input formats:

- Common terminal HTML statement (saved from your retail trading platform's “save as report” function) — most retail traders will use this

- FIX log (FIX 4.4 message dump) — for traders running through a FIX bridge or institutional connection

- CSV with the columns order_id, send_time, fill_time, requested_price, filled_price, side, volume (for everyone else, including custom logs)

If your platform doesn't expose send-time (the moment your strategy decided to send the order), you can still measure dimensions 2 and 5 (slippage asymmetry and requote rate) from a basic statement. Dimensions 1, 3, and 4 require a richer log.
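As a rough illustration of the CSV layout described above, here is a minimal sketch of a loader that checks for the seven columns and derives a per-fill latency. It is not the toolkit's own parser: the function name `load_fills`, the ISO 8601 timestamp format, and the lowercase side labels are assumptions made for this example.

```python
import csv
import io
from datetime import datetime

# Column names as listed in the CSV input format.
REQUIRED_COLUMNS = [
    "order_id", "send_time", "fill_time",
    "requested_price", "filled_price", "side", "volume",
]


def load_fills(csv_text):
    """Parse statement CSV text into a list of dicts with typed fields.

    Assumption: timestamps are ISO 8601 strings such as
    2026-04-01T09:00:00.120 (a real export may use another format).
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    missing = [c for c in REQUIRED_COLUMNS if c not in (reader.fieldnames or [])]
    if missing:
        raise ValueError(f"missing columns: {', '.join(missing)}")

    fills = []
    for row in reader:
        send = datetime.fromisoformat(row["send_time"])
        fill = datetime.fromisoformat(row["fill_time"])
        fills.append({
            "order_id": row["order_id"],
            "side": row["side"].strip().lower(),
            "requested_price": float(row["requested_price"]),
            "filled_price": float(row["filled_price"]),
            # Delay from order send to confirmed fill, in milliseconds.
            "latency_ms": (fill - send).total_seconds() * 1000.0,
            # volume is validated as a column but not retained here,
            # since none of the five dimensions uses it.
        })
    return fills
```

A statement without send_time would fail the column check in this sketch; a more forgiving loader could instead mark the latency-dependent dimensions as unavailable.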

Step 2 — Install and run

$ cd forex-broker-audit-toolkit

$ pip install -r requirements.txt

$ python beqi.py --input my_statement.html --tick-data eurusd_ticks.csv --output report.json

The toolkit auto-detects the input format. beqi.py dispatches to the per-dimension analyzers, prints a summary, and writes a JSON report you can submit to the public spreadsheet.

Step 3 — Review the report

The report includes the five dimension scores, the composite BEQI, sample-size diagnostics (lower N = lower confidence), and a tier classification (1 through 4, see below). Run it on more data to tighten the confidence intervals.

Anonymity by default

The toolkit never sees your account number, account balance, P&L, or position sizes. It only consumes timestamps, prices, requested-vs-filled deltas, and event types. The output JSON contains the BEQI scores, sample size, currency pairs covered, and time period — nothing identifying. You can submit it to the public spreadsheet anonymously.
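One way to picture this guarantee is a whitelist filter applied to each statement row before any analysis runs. The sketch below is illustrative only: the field names and the function `strip_identifying_fields` are hypothetical, and the toolkit's actual parser may work differently.

```python
# Fields the analysis needs: timestamps, prices, and event types only.
# These names are hypothetical, chosen for this example.
ALLOWED_FIELDS = {
    "send_time", "fill_time", "requested_price",
    "filled_price", "side", "event_type", "symbol",
}


def strip_identifying_fields(record):
    """Return a copy of a statement row containing only whitelisted fields.

    Anything not listed (account number, balance, P&L, position size)
    is dropped rather than masked, so it cannot reach the report.
    """
    return {key: value for key, value in record.items() if key in ALLOWED_FIELDS}
```

A whitelist is used here instead of a blacklist so that an unexpected column in a new statement format is excluded by default.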

Reading your results: the four BEQI tiers

Based on internal measurements across the brokers SharpTrader Pro supports, here are the empirical tiers. These are starting baselines — the public spreadsheet will refine them over time.

Tier 1 · Institutional ECN

BEQI 85–100

- Matching latency: 5–15 ms

- Slippage asymmetry: 1.0–1.05

- Spread widening: 1.0–1.3x

- Last-look: 0–5 ms

- Requote rate: <0.5 / 100

Tier 2 · Fast retail / fast bridge

BEQI 65–85

- Matching latency: 30–80 ms

- Slippage asymmetry: 1.05–1.20

- Spread widening: 1.3–2.0x

- Last-look: 50–150 ms

- Requote rate: 0.5–2 / 100

Tier 3 · Standard retail

BEQI 40–65

- Matching latency: 80–200 ms

- Slippage asymmetry: 1.20–1.50

- Spread widening: 2.0–3.5x

- Last-look: 200–500 ms

- Requote rate: 2–5 / 100

Tier 4 · Problematic

BEQI 0–40

- Matching latency: 200+ ms

- Slippage asymmetry: 1.50+

- Spread widening: 3.5x+

- Last-look: 500+ ms

- Requote rate: 5+ / 100

Tier classification matters because the same trading strategy will produce wildly different live results across tiers. A latency arbitrage strategy that's profitable on Tier 1 collapses on Tier 3. A trend-following strategy that holds positions for hours is roughly indifferent between Tier 1 and Tier 3 but suffers visibly on Tier 4. Knowing your broker's tier before you commit capital is the entire game.
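The composite score bands listed above can be expressed as a small lookup. This is a sketch, not the toolkit's code: the function name `beqi_tier` is hypothetical, and because the published bands share their endpoints (85, 65, and 40 each appear in two tiers), the sketch assumes a boundary score belongs to the better tier.

```python
def beqi_tier(score):
    """Map a composite BEQI score (0 to 100) to a tier number from 1 to 4.

    Assumption: shared boundary values (85, 65, 40) count toward the
    better tier, since the published bands overlap at their endpoints.
    """
    if not 0 <= score <= 100:
        raise ValueError("BEQI score must be between 0 and 100")
    if score >= 85:
        return 1  # Institutional ECN
    if score >= 65:
        return 2  # Fast retail / fast bridge
    if score >= 40:
        return 3  # Standard retail
    return 4      # Problematic
```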

The public BEQI spreadsheet

The spreadsheet is the long-term asset. Anyone can contribute one row per audit. Each row contains the five dimension scores, the composite, the sample size, the currency pairs covered, the time period, and (optionally, voluntarily) the broker name. We expect — and welcome — anonymous submissions where the broker is left blank; over time, even those produce useful aggregate distributions of execution quality across the retail forex industry.

To submit: run the toolkit, copy the JSON output, paste it into the submission form (link inside the sheet). New rows are reviewed for plausibility and accepted within 24 hours. We do not edit submitted scores; we may flag rows where sample size is <100 fills as low-confidence.

Methodology details (for reviewers and replicators)

The full mathematical specification is in METHODOLOGY.md in the repo, but the load-bearing decisions are:

1. Matching latency

Median (not mean) of fill_time − send_time. We use median because matching latency distributions are right-skewed by occasional 1–5 second outliers (network re-routing, broker GC pauses) that don't represent typical execution. We report median, p90, and p99 separately so the reader can see the tail.
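To see why the median is the more robust headline number, consider a minimal sketch using only the standard library. The function name `latency_summary` and the choice of the inclusive quantile method are assumptions for this example; METHODOLOGY.md may define the percentiles differently.

```python
import statistics


def latency_summary(latencies_ms):
    """Summarize fill latencies (fill_time minus send_time, in ms).

    Returns the median, p90, and p99, plus the mean for comparison.
    """
    if len(latencies_ms) < 2:
        raise ValueError("need at least two latency samples")
    # quantiles(n=100) returns 99 cut points; index 89 is p90, index 98 is p99.
    cuts = statistics.quantiles(latencies_ms, n=100, method="inclusive")
    return {
        "median": statistics.median(latencies_ms),
        "p90": cuts[89],
        "p99": cuts[98],
        "mean": statistics.fmean(latencies_ms),
    }
```

With 98 fills at 10 ms and two outliers at 3,000 ms and 5,000 ms, the median stays at 10 ms while the mean is pulled far above it; the outliers show up only in p99, which is where a reader should look for them.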

2. Slippage asymmetry

For each fill, slippage is signed: positive means the trader got a better price than requested, negative means worse. Asymmetry = (count of negative slippage events) / (count of positive slippage events). A symmetric, neutral broker scores ~1.0. Above 1.2 indicates the broker's matching is biased against the trader — either by routing rules, by selective last-look, or by an asymmetric slippage engine.
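The sign convention depends on the order side, which is easy to get backwards. The following sketch shows one way to compute it; the function names, the `buy`/`sell` labels, and the small tolerance used to treat near-zero differences as no slippage are assumptions for this example.

```python
def signed_slippage(side, requested, filled):
    """Positive when the trader got a better price than requested.

    A buy improves when filled below the requested price;
    a sell improves when filled above it.
    """
    if side == "buy":
        return requested - filled
    if side == "sell":
        return filled - requested
    raise ValueError(f"unknown side: {side!r}")


def slippage_asymmetry(fills, tol=1e-9):
    """Ratio of negative-slippage fills to positive-slippage fills.

    fills is an iterable of (side, requested_price, filled_price).
    Fills with zero slippage (within tol) are ignored.
    Returns None when there are no positive-slippage fills.
    """
    negative = positive = 0
    for side, requested, filled in fills:
        slip = signed_slippage(side, requested, filled)
        if slip > tol:
            positive += 1
        elif slip < -tol:
            negative += 1
    if positive == 0:
        return None
    return negative / positive
```

Note that this ratio counts events and ignores their size, as the definition above specifies; a broker with rare but large negative slippage would need a magnitude-weighted variant to show up.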

3. Spread widening factor

For each order timestamp, we measure spread in a 50 ms window centered on the order vs spread in the 5-minute baseline window centered on the same hour-of-day across the prior week. The ratio is the widening factor. This requires a tick stream covering the audit period; the toolkit accepts BJF Feed CSVs and the standard tick-history format from common platforms.
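A simplified sketch of the ratio follows. It assumes ticks arrive as (time_ms, bid, ask) tuples, averages the spread with a plain mean, and leaves the choice of baseline windows to the caller; the function names are hypothetical and the toolkit may aggregate differently.

```python
def mean_spread(ticks, start_ms, end_ms):
    """Average (ask - bid) over ticks with start_ms <= time < end_ms, or None."""
    spreads = [ask - bid for t, bid, ask in ticks if start_ms <= t < end_ms]
    return sum(spreads) / len(spreads) if spreads else None


def widening_factor(ticks, order_ms, baseline_windows, window_ms=50):
    """Spread around one order divided by the baseline spread.

    baseline_windows is a list of (start_ms, end_ms) ranges, for example
    the 5-minute windows at the same hour-of-day over the prior week.
    Returns None if either side of the ratio has no ticks.
    """
    half = window_ms / 2
    signal = mean_spread(ticks, order_ms - half, order_ms + half)
    baselines = []
    for start, end in baseline_windows:
        spread = mean_spread(ticks, start, end)
        if spread is not None:
            baselines.append(spread)
    if signal is None or not baselines:
        return None
    baseline = sum(baselines) / len(baselines)
    if baseline <= 0:
        return None
    return signal / baseline
```

Returning None instead of 1.0 when ticks are missing keeps gaps in the tick stream from looking like a broker that never widens.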

4. Last-look hold time

Only measurable from a FIX log or an extended statement that exposes both the matched-but-pending state and the confirmed state. Standard retail HTML statements typically don't expose this, in which case the toolkit reports null for this dimension and the composite BEQI is computed from the remaining four. Last-look is one of several broker-side execution interference techniques — see our deep-dive on the seven anti-arbitrage broker plugins for the broader category.
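The null-when-unavailable behavior can be sketched in a few lines. The function name and the (matched_ms, confirmed_ms) input shape are assumptions for this example; which FIX fields supply those two timestamps depends on the broker's log.

```python
import statistics


def last_look_hold_ms(fills):
    """Median delay between matched and confirmed timestamps, in ms.

    fills is an iterable of (matched_ms, confirmed_ms); either value may
    be None when the statement does not expose it. Returns None when no
    fill has both timestamps, so the dimension is reported as missing
    rather than as zero.
    """
    holds = [
        confirmed - matched
        for matched, confirmed in fills
        if matched is not None and confirmed is not None
    ]
    return statistics.median(holds) if holds else None
```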

5. Requote rate

Counted from explicit requote events in the statement. If the statement doesn't flag requotes (some terminals don't), the toolkit infers them by detecting send/cancel/resend triplets within a 200 ms window.
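The inference heuristic can be sketched as a scan for send, cancel, send runs that complete within the window. The event representation, the function names, and the decision to let the caller supply the order count are assumptions for this example, not the toolkit's implementation.

```python
def infer_requotes(events, window_ms=200):
    """Count send/cancel/resend triplets completed within window_ms.

    events is a list of (time_ms, kind) for a single instrument,
    where kind is "send" or "cancel".
    """
    ordered = sorted(events)
    count = 0
    i = 0
    while i + 2 < len(ordered):
        (t0, k0), (_, k1), (t2, k2) = ordered[i:i + 3]
        if (k0, k1, k2) == ("send", "cancel", "send") and t2 - t0 <= window_ms:
            count += 1
            # The resend may itself be the start of another triplet.
            i += 2
        else:
            i += 1
    return count


def requote_rate(requotes, n_orders):
    """Requotes per 100 orders."""
    if n_orders <= 0:
        raise ValueError("n_orders must be positive")
    return 100.0 * requotes / n_orders
```

A trader who manually cancels and resends within 200 ms would be counted as a requote by this heuristic, so inferred rates should be read as an upper bound.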

Composite BEQI

Each dimension is mapped to a 0–100 sub-score using the tier definitions above. The composite is a weighted geometric mean: BEQI = L^0.30 × A^0.25 × W^0.20 × H^0.15 × R^0.10, where L, A, W, H, and R are the sub-scores for matching latency, slippage asymmetry, spread widening, last-look hold time, and requote rate, and the weights sum to 1.0. We use a geometric mean rather than an arithmetic one so that a catastrophic score on any single dimension (e.g., last-look = 1500 ms) cannot be hidden by good scores on others. Weights are debatable and explicitly published so reviewers can recompute under different weights from the raw sub-scores.
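The weighted geometric mean, including renormalization when a dimension is null, can be sketched as follows. The dictionary keys and the function name are hypothetical, and treating a zero sub-score as a composite of zero is an assumption of this example; the weights are the published ones.

```python
import math

# Published weights, in dimension order 1 to 5.
WEIGHTS = {
    "latency": 0.30,
    "asymmetry": 0.25,
    "widening": 0.20,
    "last_look": 0.15,
    "requote": 0.10,
}


def composite_beqi(sub_scores):
    """Weighted geometric mean of the available 0 to 100 sub-scores.

    Dimensions whose sub-score is None are skipped and the remaining
    weights are renormalized to sum to 1. Returns None if nothing is
    measurable.
    """
    available = {k: v for k, v in sub_scores.items() if v is not None}
    if not available:
        return None
    if any(v <= 0 for v in available.values()):
        return 0.0  # a zero on any dimension zeroes a geometric mean
    total_weight = sum(WEIGHTS[k] for k in available)
    log_sum = sum(
        (WEIGHTS[k] / total_weight) * math.log(v) for k, v in available.items()
    )
    return math.exp(log_sum)
```

For sub-scores of 90 on four dimensions and 5 on last-look, this gives a composite of roughly 58, whereas a weighted arithmetic mean of the same inputs would be 77.25; that gap is the reason for choosing the geometric form.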

Limitations and disclosures

- Sample size dependency. A 50-fill audit is a vibe; a 500-fill audit is a measurement; a 5,000-fill audit is a benchmark. The toolkit reports sample-size confidence intervals; readers should weight low-N submissions accordingly.

- Time-of-day variance. The same broker can post Tier 2 results during London open and Tier 3 during Asia rollover. Audits should specify the time window.

- Account-level differences. Brokers can apply different routing logic per-account based on profile, balance, and trading pattern. One trader's audit may not generalize to another's on the same nominal broker. For broker-selection guidance independent of execution audit, see our forex arbitrage brokers guide.

- Asymmetry doesn't prove malice. An asymmetry of 1.4 is statistically loud, but the cause might be routing through asymmetric LP pools rather than active manipulation. The toolkit measures behavior, not intent.

- The methodology will be wrong about something. That's why it's open-source. Submit issues, send PRs, propose alternative weights, replicate against a broker we haven't covered. Methodology v2 will incorporate the strongest critiques.
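To make the sample-size point from the first limitation concrete, here is a sketch of a percentile bootstrap interval for the median latency. The bootstrap is an assumption chosen for illustration; the source does not state which interval method the toolkit uses, and the function name is hypothetical.

```python
import random
import statistics


def bootstrap_median_ci(values, n_resamples=1000, alpha=0.05, seed=0):
    """Percentile-bootstrap confidence interval for the median.

    Resamples the data with replacement, records each resample's
    median, and returns the (alpha/2, 1 - alpha/2) percentiles.
    """
    if not values:
        raise ValueError("values must not be empty")
    rng = random.Random(seed)  # seeded so the interval is reproducible
    n = len(values)
    medians = sorted(
        statistics.median(rng.choices(values, k=n)) for _ in range(n_resamples)
    )
    lo_idx = int((alpha / 2) * n_resamples)
    hi_idx = min(n_resamples - 1, int((1 - alpha / 2) * n_resamples))
    return medians[lo_idx], medians[hi_idx]
```

Running this on 50 fills and then on 500 fills from the same account would typically show a much wider interval for the smaller sample, which is why low-N rows deserve less weight.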

Roadmap

| Version | What ships | Timeline |
|---|---|---|
| v1.0 | Methodology + Python toolkit + public spreadsheet (you are here) | Q2 2026 |
| v1.1 | FIX log parser improvements; Last-look detection from heuristic patterns when explicit timestamps are absent | Q3 2026 |
| v1.2 | AI-pattern flagging dimension (sixth dimension): detection rubric for adaptive throttle behavior | Q3 2026 |
| v2.0 | Live public dashboard at beqi.bjftradinggroup.com; aggregate distributions across submissions; methodology refresh based on community feedback | Q4 2026 |

Frequently asked questions

BEQI is not a broker ranking. It is an open methodology and a public dataset of anonymized audits. We deliberately do not publish broker leaderboards from this dataset because (a) it would compromise the integrity of the data, since brokers would optimize for the audit signal rather than for execution quality, and (b) it's a legal minefield. Anyone who wants to publish a leaderboard is free to do so using the open data; we publish the tool, the methodology, and the measurements.

Most broker review sites score on UX, withdrawal speed, customer support, and spread advertising — surface variables. None of them measure matching latency, slippage asymmetry, or last-look hold. BEQI fills that specific gap: it's an execution-quality measurement, not a general broker review.

If your statement lacks the data needed for some dimensions, the toolkit handles it gracefully. Dimensions that can't be measured from your input are reported as null rather than zero, and the composite BEQI is computed from the dimensions that are measurable, with weights renormalized. The output JSON makes it explicit which dimensions were skipped and why, so the result is honest about its coverage.

Demo audits are useful but not equivalent. Many brokers route demo accounts through the institutional matching engine (no last-look, no spread widening, fast latency) while live accounts go through the retail engine. Always audit a live account with real (small) volume to measure real behavior. The toolkit will accept demo data and flag the result as “demo” in the JSON output.

200 fills is the practical minimum for a usable signal. 500–1,000 fills is where confidence intervals tighten enough to call tier classifications confidently. Above 5,000 fills, the audit becomes a research-grade measurement. You can reach 200 fills in a week by trading 30–40 small market orders per day (no need to do this with significant capital).

The methodology is forex-specific in its current form — spread widening and last-look are forex concepts, not exchange concepts. A crypto-equivalent index would need different dimensions (e.g., orderbook depth at fill price, market-impact slippage, withdrawal latency). We may publish a crypto-CEQI index later. For now, see our crypto arbitrage methodology — different metrics, same open-data philosophy. The forex toolkit will not produce meaningful results on crypto data.

BEQI and SharpTrader Optimizer fit together tightly. SharpTrader Optimizer backtests strategies with configurable execution time and tick-resolved slippage, which are the same variables BEQI measures on your live broker. If your broker scores Tier 3 (matching latency 80–200 ms), you should configure the Optimizer with execution time = 100–200 ms before deciding the strategy is viable. BEQI measures the broker; Optimizer simulates the strategy under that broker's real conditions. Together they tell you whether to trade. The same logic applies if you're trading forex arbitrage strategies more broadly: measure the broker first, then size the strategy to its actual execution profile.

It is fair to ask what BJF gains from publishing this. We have a commercial interest in arbitrage and execution-aware backtesting being taken seriously, which BEQI obviously serves. We do not have a commercial interest in any particular broker scoring well or badly: SharpTrader Pro supports brokers across all four tiers, and our customers self-select the venue. We're publishing the methodology open-source specifically so you don't have to take our word: the code is auditable, the measurements are reproducible, and any researcher can replicate or contradict our internal numbers.

You can contribute in five ways: (1) run the toolkit on your own broker and submit the JSON to the public spreadsheet; (2) open issues on the GitHub repo when the parser breaks on your statement format; (3) submit pull requests for new statement-format parsers; (4) propose methodology refinements via discussion threads; (5) write up your own analysis using the open dataset and link back. We'll feature strong external write-ups in our newsletter.

Submissions to the public spreadsheet are anonymous by default — the broker is optional, account ID is never collected, and the JSON output contains no identifying information. We have not heard of brokers retaliating against execution-quality measurement (it would be hard to do without admitting the audit was accurate), but the anonymity option exists specifically to remove that risk.

Audit your broker today

30 minutes. Open-source code. Public methodology. Anonymous submission. The benchmark only exists if traders contribute — start with your own.
